SAN FRANCISCO (Reuters) – In September last year, Google’s cloud unit looked into using artificial intelligence to help a financial firm decide whom to lend money to.

It turned down the client’s idea after weeks of internal discussions, deeming the project too ethically dicey because the AI technology could perpetuate biases like those around race and gender.

Since early last year, Google has also blocked new AI features analyzing emotions, fearing cultural insensitivity, while Microsoft restricted software mimicking voices and IBM rejected a client request for an advanced facial-recognition system.

All these technologies were curbed by panels of executives or other leaders, according to interviews with AI ethics chiefs at the three U.S. technology giants.

Reported here for the first time, their vetoes and the deliberations that led to them reflect a nascent industry-wide drive to balance the pursuit of lucrative AI systems with a greater consideration of social responsibility.

“There are opportunities and harms, and our job is to maximize opportunities and minimize harms,” said Tracy Pizzo Frey, who sits on two ethics committees at Google Cloud as its managing director for Responsible AI.

Judgments can be difficult.

Microsoft, for instance, had to balance the benefit of using its voice mimicry tech to restore impaired people’s speech against risks such as enabling political deepfakes, said Natasha Crampton, the company’s chief responsible AI officer.

Rights activists say decisions with potentially broad consequences for society should not be made internally alone. They argue ethics committees cannot be truly independent and their public transparency is limited by competitive pressures.

Jascha Galaski, advocacy officer at Civil Liberties Union for Europe, views external oversight as the way forward, and U.S. and European authorities are indeed drawing up rules for the fledgling area.

If companies’ AI ethics committees “really become transparent and independent – and this is all very utopist – then this could be even better than any other solution, but I don’t think it’s realistic,” Galaski said.

The companies said they would welcome clear regulation on the use of AI, and that this was essential for both customer and public confidence, akin to car safety rules. They said it was also in their financial interests to act responsibly.

They are keen, though, for any rules to be flexible enough to keep up with innovation and the new dilemmas it creates.

Among complex considerations to come, IBM told Reuters its AI Ethics Board has begun discussing how to police an emerging frontier: implants and wearables that wire computers to brains.

Such neurotechnologies could help impaired people control movement but raise concerns such as the prospect of hackers manipulating thoughts, said IBM Chief Privacy Officer Christina Montgomery.

AI CAN SEE YOUR SORROW

Tech companies acknowledge that just five years ago they were launching AI services such as chatbots and photo-tagging with few ethical safeguards, and tackling misuse or biased results with subsequent updates.

But as political and public scrutiny of AI failings grew, Microsoft in 2017 and Google and IBM in 2018 established ethics committees to review new services from the start.

Google said it was presented with its money-lending quandary last September when a financial services company figured AI could assess people’s creditworthiness better than other methods.

The project appeared well-suited for Google Cloud, whose expertise in developing AI tools that help in areas such as detecting abnormal transactions has attracted clients like Deutsche Bank, HSBC and BNY Mellon.

Google’s unit anticipated AI-based credit scoring could become a market worth billions of dollars a year and wanted a foothold.

However, its ethics committee of about 20 managers, social scientists and engineers who review potential deals unanimously voted against the project at an October meeting, Pizzo Frey said.

The AI system would need to learn from past data and patterns, the committee concluded, and thus risked repeating discriminatory practices from around the world against people of color and other marginalized groups.

What’s more, the committee, internally known as “Lemonaid,” enacted a policy to skip all financial services deals related to creditworthiness until such concerns could be resolved.

Lemonaid had rejected three similar proposals over the prior year, including from a credit card company and a business lender, and Pizzo Frey and her counterpart in sales had been eager for a broader ruling on the issue.

Google also said its second Cloud ethics committee, known as Iced Tea, this year placed under review a service released in 2015 for categorizing photos of people by four expressions: joy, sorrow, anger and surprise.

The move followed a ruling last year by Google’s company-wide ethics panel, the Advanced Technology Review Council (ATRC), holding back new services related to reading emotion.

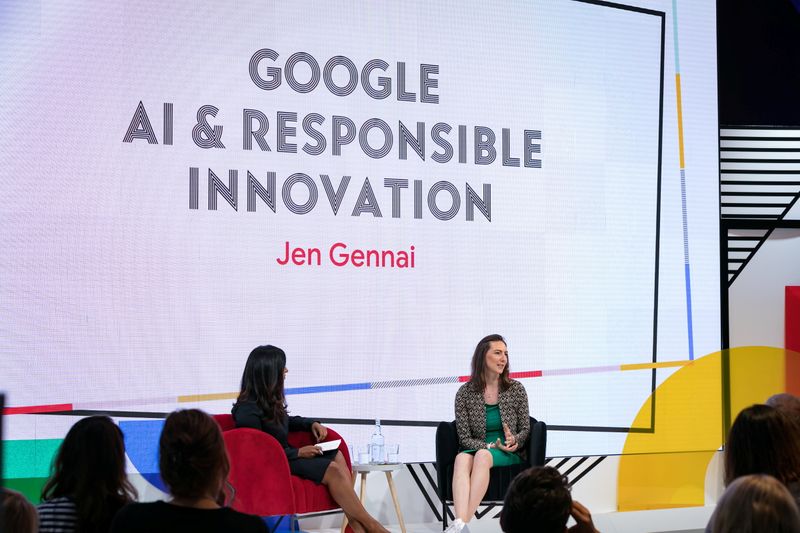

The ATRC – over a dozen top executives and engineers – determined that inferring emotions could be insensitive because facial cues are associated differently with feelings across cultures, among other reasons, said Jen Gennai, founder and lead of Google’s Responsible Innovation team.

Iced Tea has blocked 13 planned emotions for the Cloud tool, including embarrassment and contentment, and could soon drop the service altogether in favor of a new system that would describe movements such as frowning and smiling, without seeking to interpret them, Gennai and Pizzo Frey said.

VOICES AND FACES

Microsoft, meanwhile, developed software that could reproduce someone’s voice from a short sample, but the company’s Sensitive Uses panel then spent more than two years debating the ethics around its use and consulted company President Brad Smith, senior AI officer Crampton told Reuters.

She said the panel – specialists in fields such as human rights, data science and engineering – eventually gave the green light for Custom Neural Voice to be fully released in February this year. But it placed restrictions on its use, including that subjects’ consent is verified and that a team with “Responsible AI Champs” trained on corporate policy approves purchases.

IBM’s AI board, comprising about 20 department leaders, wrestled with its own dilemma when early in the COVID-19 pandemic it examined a client request to customize facial-recognition technology to spot fevers and face coverings.

Montgomery said the board, which she co-chairs, declined the invitation, concluding that manual checks would suffice with less intrusion on privacy because photos would not be retained for any AI database.

Six months later, IBM announced it was discontinuing its face-recognition service.

UNMET AMBITIONS

In an attempt to protect privacy and other freedoms, lawmakers in the European Union and United States are pursuing far-reaching controls on AI systems.

The EU’s Artificial Intelligence Act, on track to be passed next year, would bar real-time face recognition in public spaces and require tech companies to vet high-risk applications, such as those used in hiring, credit scoring and law enforcement.

U.S. Congressman Bill Foster, who has held hearings on how algorithms carry forward discrimination in financial services and housing, said new laws to govern AI would ensure an even field for vendors.

“When you ask a company to take a hit in profits to accomplish societal goals, they say, ‘What about our shareholders and our competitors?’ That’s why you need sophisticated regulation,” the Democrat from Illinois said.

“There may be areas which are so sensitive that you will see tech firms staying out deliberately until there are clear rules of road.”

Indeed, some AI advances may simply be on hold until companies can counter ethical risks without dedicating enormous engineering resources.

After Google Cloud turned down the request for custom financial AI last October, the Lemonaid committee told the sales team that the unit aims to start developing credit-related applications someday.

First, research into combating unfair biases must catch up with Google Cloud’s ambitions to increase financial inclusion through the “highly sensitive” technology, it said in the policy circulated to staff.

“Until that time, we are not in a position to deploy solutions.”

(Reporting by Paresh Dave and Jeffrey Dastin; Editing by Kenneth Li and Pravin Char)